Logstash can cleanse logs, create new fields by extracting values from the log message and other fields using a very powerful, extensible expression language, and a lot more. Introducing a new app field, bearing the application name extracted from the source field, would be enough to solve the problem.

Final configuration

Filebeat configuration will change to filebeat:

And Logstash configuration will look like input

If the source field has the value “/var/log/apps/alice.log”, the match will extract the word alice and set it as the value of the newly created field app. Developers can run exact term queries on the app field, e.g.: $ curl :asc&sort=offset:asc&fields=message&pretty | grep message

Install Kibana for log browsing to make developers ecstatic.

This tutorial is explained in the YouTube video below.

Download the latest version of Elasticsearch from Elasticsearch downloads. Run elasticsearch.bat from the command prompt. Elasticsearch can then be accessed at localhost:9200.

Download the latest version of Kibana from Kibana downloads. Modify kibana.yml to point to the Elasticsearch instance. Run kibana.bat from the command prompt. The Kibana UI can then be accessed at localhost:5601.

Download the latest version of Logstash from Logstash downloads.

When using the ELK stack, we ingest data into Elasticsearch, and that data is initially unstructured. We first need to break the data into a structured format and then ingest it into Elasticsearch. Such data can then be used later for analysis. This manipulation of unstructured data into structured data is done by Logstash. Logstash itself makes use of the grok filter to achieve this.

Similar to how we did in the Spring Boot + ELK tutorial, create a configuration file named nf. Here Logstash is configured to listen for incoming Beats connections on port 5044. Also, on getting some input, Logstash will filter the input and index it to Elasticsearch.

Online Grok Pattern Generator Tool for creating, testing and debugging grok patterns required for Logstash.
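The extraction of the app field from the source field, described above, can be sketched in plain Python. This is only an illustration of the idea, not the Logstash grok configuration itself: the regular expression, the function name `add_app_field`, and the sample event are all assumptions made for this example.

```python
import re

# Hypothetical pattern: capture the file name (minus ".log") directly under /var/log/apps.
APP_FROM_SOURCE = re.compile(r"^/var/log/apps/(?P<app>[^/]+)\.log$")


def add_app_field(event: dict) -> dict:
    """Return a copy of the event with an `app` field derived from `source`.

    Events whose `source` does not match the pattern are returned unchanged.
    """
    enriched = dict(event)
    match = APP_FROM_SOURCE.match(event.get("source", ""))
    if match:
        enriched["app"] = match.group("app")
    return enriched


# Sample event, invented for illustration.
event = {"source": "/var/log/apps/alice.log", "message": "started"}
print(add_app_field(event))
```

With an app field in place, a search no longer needs to mention the log file path at all.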
Developers shouldn’t know about log locations. I bet developers will get pissed off very soon with this solution. They have to do a term search with the full log file path or they risk receiving unrelated records from logs with a similar partial name. The problem is aggravated if you run applications inside Docker containers managed by Mesos or Kubernetes.

A better solution

A better solution would be to introduce one more step. Instead of sending logs directly to Elasticsearch, Filebeat should send them to Logstash first. Logstash will enrich logs with metadata to enable simple, precise search and will then forward the enriched logs to Elasticsearch for indexing.

Logstash is an open source data collection engine with real-time pipelining capabilities.
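To illustrate why searching by a partial file name is risky, here is a small Python sketch. It is a simplification: real matching in Elasticsearch depends on how the field is analyzed, and the records, the `alice-admin` and `bob` applications, and both function names are hypothetical.

```python
# Sample records, invented for illustration; only the alice.log path comes from the post.
records = [
    {"source": "/var/log/apps/alice.log", "message": "alice started"},
    {"source": "/var/log/apps/alice-admin.log", "message": "admin started"},
    {"source": "/var/log/apps/bob.log", "message": "bob started"},
]


def by_partial_name(items, fragment):
    """Keep records whose source path merely contains the fragment."""
    return [r for r in items if fragment in r["source"]]


def by_full_path(items, path):
    """Keep records whose source path equals the given path exactly."""
    return [r for r in items if r["source"] == path]


# The partial search also picks up the similarly named alice-admin log.
print(len(by_partial_name(records, "alice")))
print(len(by_full_path(records, "/var/log/apps/alice.log")))
```

The exact search is precise but forces developers to know the full path, which is the inconvenience the extra Logstash step is meant to remove.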

It monitors log files and can forward them directly to Elasticsearch for indexing.

Filebeat configuration which solves the problem via forwarding logs directly to Elasticsearch could be as simple as: filebeat:

Developers will be able to search for logs using the source field, which is added by Filebeat and contains the log file path. Note that I used localhost with the default port and a bare minimum of settings. If you’re paranoid about security, you have probably raised your eyebrows already.
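A search on the source field could be expressed as an Elasticsearch term query. The Python sketch below only builds the request body and sends nothing over the network. The function name `source_term_query` is an assumption, and whether an exact term match works depends on the field not being analyzed in your index mapping.

```python
import json


def source_term_query(log_path: str) -> dict:
    """Build a query body that matches documents whose `source` equals log_path."""
    return {"query": {"term": {"source": log_path}}}


# Print the body that would be sent to a search endpoint.
body = source_term_query("/var/log/apps/alice.log")
print(json.dumps(body, indent=2))
```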

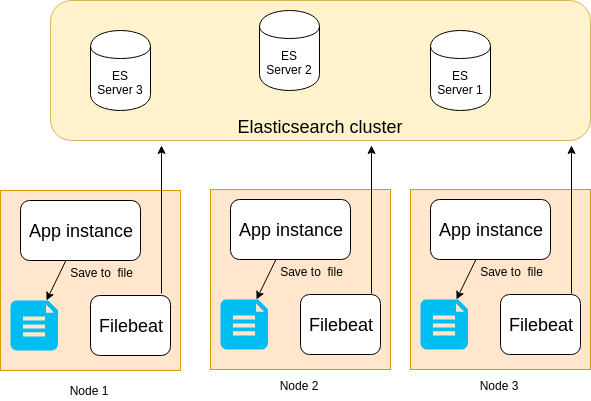

You are lucky if you’ve never been involved, on either side, in a confrontation between devops and developers in your career. In this post I’ll show a solution to an issue which is often under dispute: access to application logs in production.

Imagine you are a devops responsible for running company applications in production. Applications are supported by developers who obviously don’t have access to the production environment and, therefore, to production logs. Imagine that each server runs multiple applications, and the applications store logs in /var/log/apps. A server with two running applications will have this log layout: $ tree /var/log/apps

The problem: How to let developers access their production logs efficiently?

A solution

Feeling developers’ pain (or getting pissed off by regular “favours”), you decided to collect all application logs in Elasticsearch, where every developer can search for them. The simplest implementation would be to set up Elasticsearch and configure Filebeat to forward application logs directly to Elasticsearch. I’ve described in detail a quick intro to Elasticsearch and how to install it in my previous post. So have a look there if you don’t know how to do it.

Filebeat

Filebeat, which replaced Logstash-Forwarder some time ago, is installed on your servers as an agent.
